| Physics and Astronomy |

|

Back to top

On this page

Contents PHY2027 Lecture 0Overview This lecture acts as a preface to

the more-formal lectures. The aim is to introduce the main

concepts we will encounter in a less formal and relatively

"non-computer" way. Don't expect to have any answers to the issues we raise here,

these will start to come over the rest of the course.

Rather, the aim is that if you think about these issues now

it will be easier to understand them when we address them

later on.

This week's exercise in the computing lab will enable us to explore these in a fairly fun way before the real hard work begins in the next lecture. People v computersIn this module we will be learning to program computers. Computers are made up of millions of digital logic circuits which behave quite differently from people. Logic circuits flip from high to low voltages very reliably and seldom make mistakes of their own, but they have no "high level" understanding such as inferring our intentions from our instructions, which means they can not recognise mistakes in them. We may sumarise their relative strenghts and weaknesses as follows (where we have put the strengths in bold):

Our experience with computers so farOur experience with computers so far has probably given us a false sense of security as we have interacted with them via applications (also known as "programs") written by other people. Our input consists of high-level commands such as "send this email" which are interpreted by the application which in turn sends a sequence of low-level instructions to the computer's processor to perform the task. These programs are remarkably tolerant of our tendency to make mistakes and at working out what we want them to do even when that isn't what we actually tell them to do, warning us if we have mis-spelled a word or quit with unsaved changes to documents. They're nowhere near as good at this as a fellow human being but then again, they are much better spellers. We can think of this as adding a protective middle layer between us and direct instructions to the computer hardware:

The protective middle layer isn't perfect of course but it makes far fewer mistakes than we do, and is more tolerant of our mistakes than the computer hardware is. Now we are learning to write our own programs we will no longer have this luxury: we are giving instructions directly to the computer and it will do exactly what we say. Which is both good and bad. Computers have no intuition but instead follow simple instructions with great accuracy. The only way to understand them is to "walk through" the logic ourselves. Warm up discussionIf you are reading these notes on your own it is still well-worth thinking about these questions, even if you are just revising the lecture. Consider the following set of instructions:

(For simplicity assume there is no ambiguity about what constitutes a turning and we don't need to worry about roads being closed.) Turn to the person next to you, or form a three if necessary. First, work out where the instructions take you and then discuss the following questions. The questions with answer boxes are particulaly important: fill them in either now or later in your own time.

"Law of averages" errors I have a bad memory of a driver asking me

for directions which I gave starting with something like "Turn

left at the first roundabout" and then remembering just after

he drove off that I should have said the second

roundabout... Imagine you were given a considerably longer set of directions in the style of the ones above without even the address of our destination, perhaps just a house number. We would probably feel rather nervous about our chances of arriving at the right place and would certainly make sure we had the mobile-phone number of the person who gave them to us. Realistically, given a set of twenty or thirty instructions like this, one of the two humans involved, either the person who gave the directions or the person following them, will almost certainly make a mistake. When dealing with people the best instructions to give, and the ones we most like to receive, are those that have confirming information such as "you'll see the Red Lion pub on your left" or "take the second turning on the right onto the A371". Simply writing a long list of instructions and looking at the final result is unlikely to be successful. We are too likely to have made a mistake somewhere along the line and being faced with a list of hundreds or even thousands of instructions knowing that (at least) one contains a mistake but not knowing which one is not a pleasant thought. Instead we should test the results of obeying our instructions along the way to see if they are correct and only proceed with writing the next ones once we are confident of the ones so far. This is easily done with directions, first because we know where we are going and what the roads and landmarks are along the way, and second because the person following the instructions is an intelligent agent who can decide whether the Red Lion is indeed on their left and if not take appropriate action. We make mistakes so sequences of instructions will need periodic tests to check they are correct. Would you drive into a river if your satnav told you to? A taxi driver following his satellite navigating instructions ended up stranded in a river after taking a wrong turning at a ford in the road. The red-faced driver - working for a taxi company called Streamline - drove 200 yards up the River Nar in Norfolk before his eight-seater minibus ground to a halt on the muddy river bed. [ Daily Mail, photo Albanpix.com ]

A woman wrote off her �96,000 Mercedes-Benz and nearly drowned when she followed the instructions of her onboard satellite navigation device into a river... The woman, who identified herself as Hayley, 28, from London, drove her SL500 into the river where it was swept 200m downstream, bouncing from bank to bank. [ The Times, photo Tony Swift published in The Evening Standard ] Measures put in place to stop

sat-nav cliff diversion disaster.

Darlington and Stockton Times The discussion in the previous section reminds us of a rather more subtle problem: We don't expect people to follow our instructions literally. Instead we tell them what we are trying to achieve and (approximately) how to do it. We trust in their common sense to fill in any gaps and if necessary to modify or even disobey our instructions when it's clear that there's a difference between the literal interpretation of the instructions and what we are trying to achieve. And usually they do... Clearly this isn't going to work when programming computers. This more or less covers the situation we have been dealing with for most of our lives: giving instructions to humans, or at the very least computer applications into which humans have put a great deal of effort into making them a user-friendly as possible. In this course we are learning to do something superficially similar but actually quite different: Giving instructions to computersAlgorithms and dataComputer programs are about algorithms and data. An algorithm is unambiguous specification of a set of instructions that can be followed in a mechanical, unthinking, manner to achieve the desired result. Unlike the instructions we give to human beings algorithms do not require, or even allow for, the use of common sense or intelligence. The agent executing the algorithm has absolutely no choice in what steps to take. All the intelligence is in the algorithm. The instructions we discussed at the beginning of this lecture are an example of an algorithm as they do not allow the application of individual intelligence or judgement when executing them. Another example of an algorithmWe are unlikely to ever need to tell a computer to walk to St Davd's station! A simple but more realistic example might be that we are asked to write a computer program to calculate the wages due to an hourly-paid employee given their hourly pay and a set of time-sheets saying how many hours they had worked each day. The specification might be: Specification: an employee's pay is the total number of hours they have worked multiplied by their hourly wage, with Sundays paying double time and Saturdays time and a half. Such a specification is not in itself an algorithm as it is not a sequence of instructions, but it is not hard to come up with one. A typical example might be:

Given such a set of instructions a person of average intelligence could calculate the pay due to each employee. Our algorithm above has several of the features and issues found in just about every computer program. All of these are important and all of which are simple to understand and deal with in a problem of this size. As our program grows in size some of these issues will remain simple and manageable. Others will not. Let's look at a few of these concepts in turn, as they illustrate the major issues we will be covering over the next few weeks. Features of this algorithmDataThe algorithm has terms such as"day-of-the-week " and "hourly-wage" which serve as "place holders", to be replaced by the actual value of that quantity when the algorithm is run, as well as others such as "running-total" which are calculated at the time. Furthermore the value of "running-total" is not a fixed quantity but is updated as the algorithm progresses. Quantities such as running-total, hourly-wage and day-of-the-week are referred to as variables. At the supermarket the till keeps track of the running total of the bill, which changes as items are swiped through. When they ask us for 16.82 at the end it's no use complaining that after they had swiped through the first item the bill was only 50p! Some quantities, such as running-total change their values as the algorithm is executed. In fact, if we look at the description of what we were trying to achieve we will see that it is defined in terms of data: what we are trying to calculate and how that is related to what we already know. Growability factor: large. The key to writing or understanding an algorithm is to understand the data it operates on, and the results it is meant to produce. Sequences of instructions which are performed several times (loops)"For each employee ..." "For each day ...".Almost every program we ever write will contain a number of repeated actions, and the C programming language contains several convenient ways of defining them. The one illustrated here is a common type where a particular variable, in this case the day, has a different value every time the loop is run ("Monday", then "Tuesday", etc). Growability factor: small. If ... otherwise"If the day is a Saturday ..." "otherwise ..."(To save typing, programming languages tend to use the word "else" instead of "otherwise".) These steps, often called as conditionals refer to steps ("multiply that day's pay by two.") which are only performed if a certain condition is met ("If the day is a Sunday"). Algorithms have subsets of instructions that may be executed several times or only if a certain condition true. You may have noticed that our two criteria ("It's Saturday" and "it's Sunday") are mutually exclusive so we could have missed out the word "otherwise" and simply written:

However, the two are not always equivalent,

for example a menu application might say "If the diner is

lactose-intolerant substitute non-dairy ice-cream, if the diner

is gluten-intolerant substitute rice noodles...", in which case

adding "otherwise" to the second and subsequent conditions would

be a mistake.(In our actual Sunday/Saturday

case having the word "otherwise" is clearer which is why we

prefer it.) Notice too that the criterion depends on the values of one of our variables, in this case the day. As the condition is inside a loop ("for each day") it follows that the day variable will sometimes be "Sunday", sometimes "Monday", etc. So our variables are not just used for "obvious" mathematical calculations they also control the behaviour of the algorithm. The criteria that decide whether an instruction is executed or how often a loop is run depend on the values of our variables. Growability factor: medium. Issues with algorithmsRequirements changeWe can illustrate this by noticing that our specification does not account for Bank Holidays (also known as public holidays). This could easily change, either because somebody realised they had forgotten about them, or because of a genuine change in pay agreements after the original specification. Both of these are very common and should be borne in mind when writing our computer program. The revised specification might be: Specification (v1.1): An employee's pay is the total number of hours they have worked multiplied by their hourly wage, with Sundays and Bank Holidays paying double time and non-Bank-Holiday Saturdays time and a half. Note the careful definition: had we said "Sundays and Bank Holidays paying double time and Saturdays time and a half" the question would have arisen: "Do Bank Holiday Saturdays pay time and a half, double time or triple time?". Look out for ambiguities in specifications, they make lawyers rich and programmers make mistakes. The relevant part of our algorithm is now:

Did you notice that the directions we

discussed at the start of the lecture took us the wrong way down

a one-way street? Did you ask which exit we were leaving from?

These are typical "assumptions" problems: the instructions themselves are fine but we have made an assumption about exactly what we are trying to do (go by foot or by car) and precisely where we started from. Notice that missing out the "otherwise" would now cause an error. We will always need to manually "walk though" our algorithm to check for errors. (See below.) A walk through will catch an error if it's a simple oversight.

But suppose we implicitly assumed that all Bank Holidays are

Mondays (as they tend to be in the UK), forgetting about

Christmas and New Year's days. The walk-through wouldn't catch

that as we would think it was correct. Be even more careful about implicit assumptions than you are about ambiguities. Growability factor: medium. Our simple task is part of a larger wholeAlthough we presented this as a standalone task it obviously isn't: in reality: before any employee was actually paid we would need to calculate and deduct their tax. But that didn't really matter - we were able to present this as a separate task without thinking about the complete payroll package. This is a very important principle and directly impacts on the next issue.Growability factor: large. It requires us to think about more than one thing at a timeAlthough our "directions" example is vulnerable to "law of averages" errors, it has one huge simplifying factor: we only have to think about one thing at a time, namely the next direction. Our wages example does not have this, whilst calculating the day's salary we have to think about two or three things at a time (hourly wage, hours worked and the day).Growability factor: "Look out, I think it's coming this way!!". ExerciseIn pairs or threes play multiplication ping-pong: one person says two one-digit numbers ("seven times two"), the other person says the answer and asks one back ("fourteen. Four times eight"). It's not a race but try to establish what the comfortable speed is. Then up it to a one-digit and a two-digit number ("four times twenty-seven"), after few of those go up to two two-digit numbers, then two-digit time three-digit... How would you describe the shape of the graph of difficulty plotted against complexity? (I.e., how quickly does it get more difficult as the number of digits increases?)

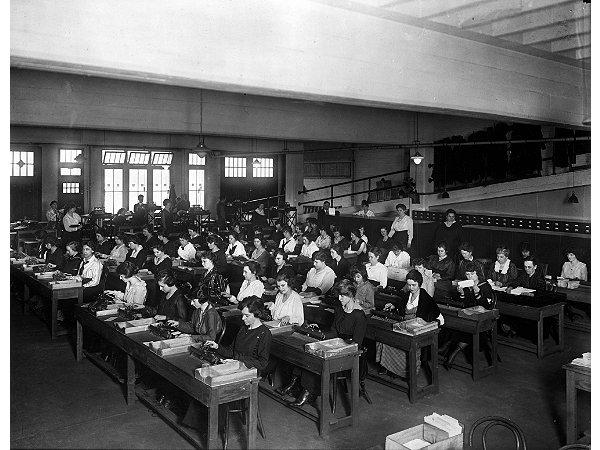

This is the single biggest issue we will face and we shall be thinking about strategies to deal with this over the next few weeks. Other issues with algorithms"Canned" instructions that can be used several timesOur initial example, going from the Physics Building to St David's station, was fine as a one-off. But as mentioned above, iIn real life we have a mental list of places we know how to get to ("the High Street", "the M5", "Crediton") to act as common first stages (the directions from Exeter to a lot of places start "take the M5 north..."). Without this collection, or library, of "sub-routes" we would find it very hard to remember how to get anywhere. This also fits in with our "testing" idea: it's relatively easy to check a short route is correct and once that's done we can use it again and again. Less obviously, but very importantly, routes can change due to new road layouts and we instinctively think of this in changes to canges to one route ("getting to the M5") rather than a large number of routes which all start the same ("getting to Bristol", "getting to Birmingham", "getting to my brother's house"). In practice all algorithms of any complexity can only be made manageable by this process of divide and rule. Long sequences of instructions will need to be broken down into independent chunks each with their own tests to check they are correct. Handling ErrorsFinally, one major aspect of programming this example does not illustrate is the handing of errors ("I can't find Fred Smith's time sheets!"). We will start to address this later on in the module. Layout and "white space"One factor that's so obvious that you probably didn't notice it is that when we displayed the algorithm we automatically indented the levels of bullet points to reflect the structure of the algorithm. Bullet points are always displayed this way of course, and so are computer programs and for the same reason: it makes them much easier to understand. The "rules" for using so-called "white space" to enhance readability go back to the beginning of print (and maybe before?) and also include using blank lines to indicate divisions and subdivisions. White space has no effect on the meaning of the algorithm but when used correctly makes it easier for us to understand (and when used incorrectly, harder to understand!). Walking through our algorithmsTo execute our initial "getting to St David's" example we would simply travel down the route. Of course we didn't actually have to do this to work out where it led to: mentally we (almost literally) walked through the algorithm and kept track of our location and direction of travel. These two pieces of information, together with the next instruction to be executed, told us all we needed to know and enabled us to work out what the algorithm did. If we wished to visualise this to somebody else we might have a map with a little arrow showing were we were and watch the little arrow moving as we follow the instructions. For our our more-realistic "wages" example what we need to keep track of is not our physical position but the current value of the hourly rate, the running total etc, ie the values of our variables. These are the equivalent of our "location and direction of travel" in the previous example and to visualise the current state of our algorithm and to see what effect it has we just need to show the value of its variables. The main question we need to ask about a short section of our code is "what happens to the values of our variables?". Stepping through our algorithmsEverything the computer does is incredibly simple and to understand a piece of code we must be able to work out what we would do if we were to be executing it ourselves. A bit of an aside: the original, human, computersAmazing as it may seem today the original "computers" were actually human beings equipped with a set of instructions (the algorithm), a mechanical calculating machine and some cards for recording the results. Usually, of course, there would be a large number of intermediate results which the computer would write on a card and s/he would then read the number off the card later on in the calculation.  A room of computers from around the 1920s. Note the woman in the fourth row reading instructions and/or data from a card. Image: United States Library of Congress. These computers would easily be capable of running the small pieces of computer program we will be dealing with in the next few lessons. The computer isn't doing anything they couldn't do, it's just doing it really, really fast and with an enormous amount of memory. The human computerWhen evaluating an algorithm such as our "wages" example we can imagine ourselves as a "human computer" equipped with:

We execute the program steps by performing the calculations and and writing the resulting variable values on small yellow index cards, writing in pencil (so the value can be changed) with one variable value one per card. We might decide to use card 200 to store the hourly wage, card 208 to store the hours worked, etc. When a variable's value changes we rub out the old value on the index card and use the pencil to write in the new one. When we need to know the value of a variable we just read it off the card. It's not important at this stage but of course, this requires that we can remember which variable value is written on each card. We could just have a piece of paper that says "hourly-wage on card 200" etc but after a little while we would probably realise we could save constantly referring to our piece of paper by just writing the card number next to each occurence of the variable nme in our program. We could even write a version of our program that replaces all the occurences of hourly-wage by @200 (meaning the number on card 200), and replaces hours-worked by @208 etc. It may seem extremely strange that we can model such as complicated thing as a computer so simply. But we can do this because the computer is designed to hide its complexity from us and to take very simple instructions and make them happen very quickly without giving any indication that the underlying complexity even exists. . For reasons that will become very important in

the latter half of this course we will assume that the cards are

individually numbered and arranged in numerical order, to help

us find the one we want, and that we say to ourselves something

like "I will store the value of variable x on card 12". This is actually a remarkably accurate model of how

the computer actually works and we shall use it for

the next few lessons. (NB: it is the fact that the cards are numbered that makes this a good model of

how the computer behaves. If you are wondering why ask yourself

"how does an actual computer specify where it is storing the

value of a variable?") We will see this simple model "in action" on very short

computer programs in the next few lessons. By analogy with a house number, the variable's index-card number is called the "address" of that variable as it not only identifies it but also tells us where to find it. To be absolutely clear: we never need to know the actual value of a variable's address, but we do need to understand the concept. We shall see later in the course how we can make use of this when we come to consider, amongst other things, vectors and arrays. In the following lessons we will illustrate stepping through very short pieces of computer programs showing what happens to the values of the variables and how they are used. To think about after the lectureOver the next few weeks, in addition to learning the mechanics of the C programming language, we will start to address the following points:

SummaryThe text of each key point is a link to the place in the web page. People v computers

Algorithms and data

After this lecture you should be able to...

| ||||||||||||||||||||||||||||||||||||||||||||||||||||||||